Loss Functions in Deep Learning

📅 2/27/2026

Introduction to Loss Functions

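Loss functions measure how well a model's predictions match the actual (target) data: the larger the loss, the worse the fit, and training adjusts the model's parameters to minimize it.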
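
In the usual supervised setting, a per-example loss is averaged over the training data. As a sketch in notation assumed here (not from the slides: N examples with inputs x_i and targets y_i, a model f_θ with parameters θ, and a per-example loss ℓ), training seeks parameters that minimize

```latex
\mathcal{L}(\theta) = \frac{1}{N} \sum_{i=1}^{N} \ell\bigl(f_\theta(x_i),\, y_i\bigr)
```

with ℓ chosen from losses such as those on the following slides.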

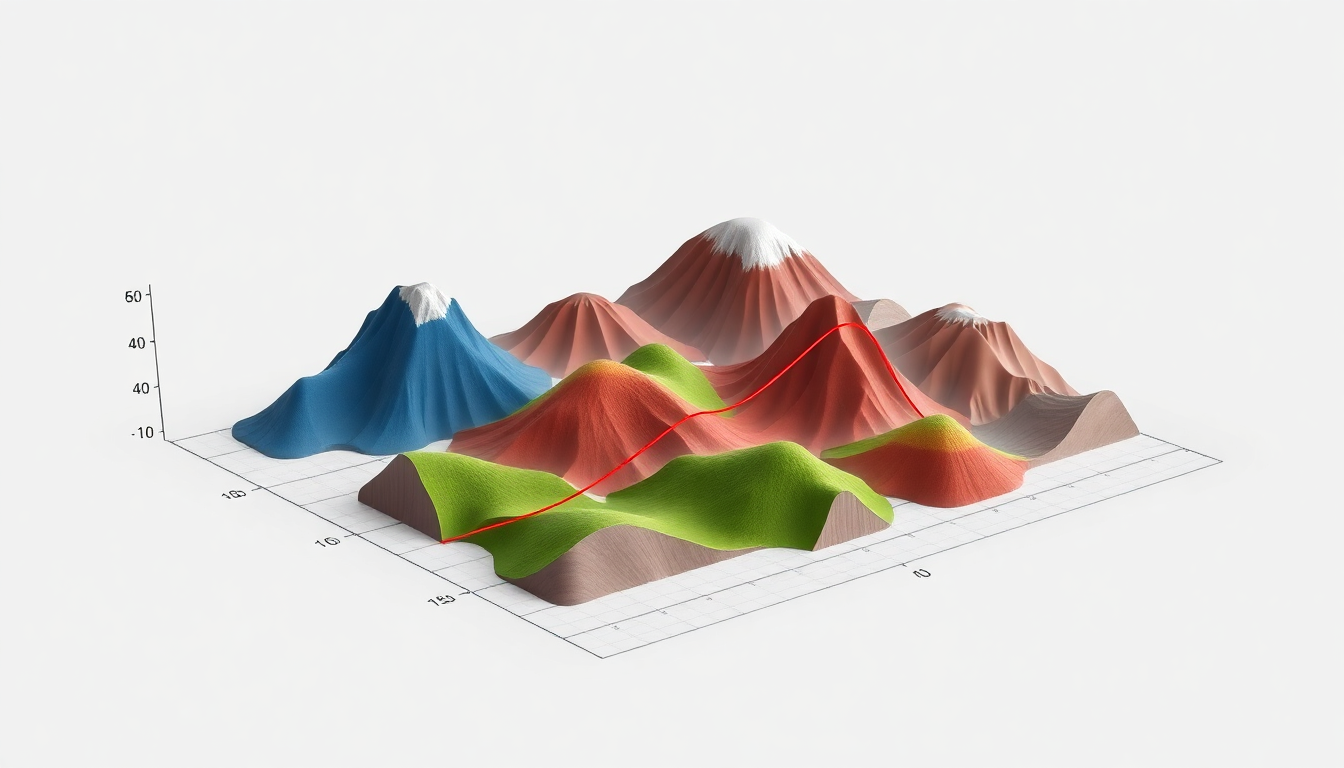

Common Regression Loss Functions

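- Mean Squared Error (MSE): Averages the squared differences between predictions and targets; because errors are squared, large errors dominate, making it sensitive to outliers

- Mean Absolute Error (MAE): Averages the absolute differences; each error contributes linearly, so it is more robust to outliers than MSE

- Huber Loss: Quadratic (like MSE) for errors below a threshold delta (δ) and linear (like MAE) above it, combining MSE's smoothness near zero with MAE's robustness to outliers

- Used for predicting continuous values such as house prices or temperatures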
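
A minimal NumPy sketch of the three regression losses above, assuming 1-D arrays of targets and predictions. The function names are illustrative, and the Huber loss uses the common ½-scaled convention with an assumed default δ = 1.0; libraries may differ in scaling and defaults.

```python
import numpy as np


def mse(y_true, y_pred):
    """Mean squared error: the average of the squared residuals."""
    r = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return float(np.mean(r ** 2))


def mae(y_true, y_pred):
    """Mean absolute error: the average of the absolute residuals."""
    r = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return float(np.mean(np.abs(r)))


def huber(y_true, y_pred, delta=1.0):
    """Huber loss: 0.5 * r**2 when |r| <= delta, else delta * (|r| - 0.5 * delta)."""
    r = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    abs_r = np.abs(r)
    quadratic = 0.5 * r ** 2                  # MSE-like region for small errors
    linear = delta * (abs_r - 0.5 * delta)    # MAE-like region for large errors
    # The two pieces meet (same value, 0.5 * delta**2) at |r| == delta.
    return float(np.mean(np.where(abs_r <= delta, quadratic, linear)))


# The last target is an outlier that the model badly misses.
y_true = np.array([3.0, 5.0, 2.0, 100.0])
y_pred = np.array([2.5, 5.0, 2.0, 10.0])
print("MSE:  ", mse(y_true, y_pred))
print("MAE:  ", mae(y_true, y_pred))
print("Huber:", huber(y_true, y_pred))
```

With the single outlier in this toy data, MSE is dominated by that one squared error, while MAE and Huber grow only linearly with it and end up roughly two orders of magnitude smaller.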

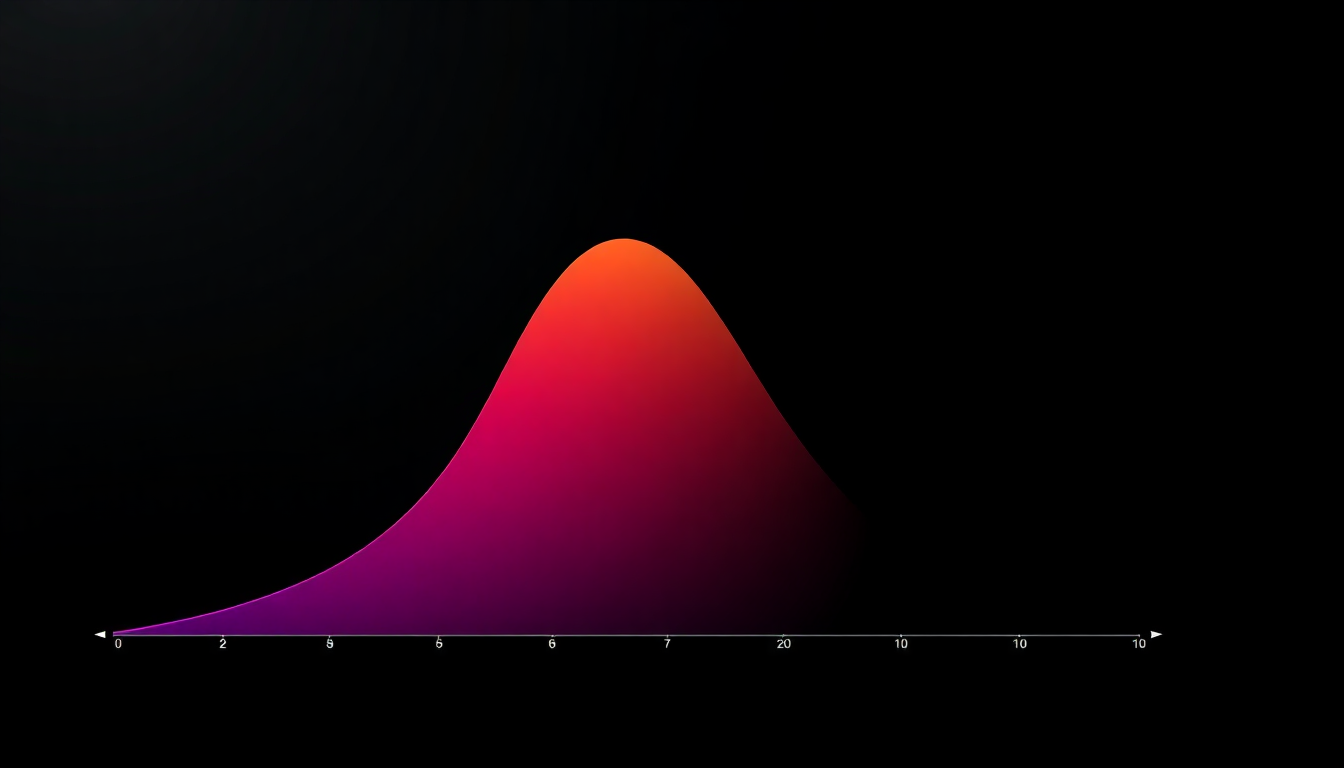

Classification Loss Functions

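- Cross-Entropy: Measures the difference between the true label distribution and the model's predicted probabilities; the standard loss for classification

- Binary Cross-Entropy: Specialized for yes/no (two-class) tasks, typically applied to a sigmoid output

- Categorical Cross-Entropy: For multi-class problems with one-hot-encoded targets, typically applied to a softmax output

- KL Divergence: Measures how one probability distribution differs from a reference distribution; it is not symmetric, and it equals the cross-entropy minus the entropy of the reference distribution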
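
A minimal NumPy sketch of these classification losses, assuming the model outputs probabilities (not raw logits). The helper names and the clipping constant `EPS` are illustrative assumptions; production code usually computes these losses directly from logits for numerical stability.

```python
import numpy as np

EPS = 1e-12  # clip probabilities away from 0 and 1 so that log() stays finite


def binary_cross_entropy(y_true, p_pred):
    """Mean BCE for labels in {0, 1} and predicted probabilities p = P(y = 1)."""
    y = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), EPS, 1.0 - EPS)
    return float(-np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))


def categorical_cross_entropy(y_target, p_pred):
    """Mean CCE over N examples.

    y_target: (N, C) one-hot (or soft) target distributions.
    p_pred:   (N, C) predicted class probabilities, e.g. softmax outputs.
    """
    y = np.asarray(y_target, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), EPS, 1.0)
    return float(-np.mean(np.sum(y * np.log(p), axis=1)))


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions; terms with p_i = 0 contribute 0."""
    p = np.asarray(p, dtype=float)
    q = np.clip(np.asarray(q, dtype=float), EPS, 1.0)
    mask = p > 0
    return float(np.sum(p[mask] * np.log(p[mask] / q[mask])))


print(binary_cross_entropy([1, 0, 1], [0.9, 0.2, 0.6]))
print(categorical_cross_entropy([[1, 0, 0], [0, 1, 0]],
                                [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]))

# KL(p || q) = H(p, q) - H(p), where H(p, q) is the cross-entropy and H(p) = H(p, p).
p, q = [0.5, 0.5], [0.9, 0.1]
print(kl_divergence(p, q),
      categorical_cross_entropy([p], [q]) - categorical_cross_entropy([p], [p]))
print(kl_divergence(q, p))  # differs from KL(p || q): KL is not symmetric
```

For two classes, categorical cross-entropy on one-hot targets reduces to binary cross-entropy, which is why the binary form is treated as a special case.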

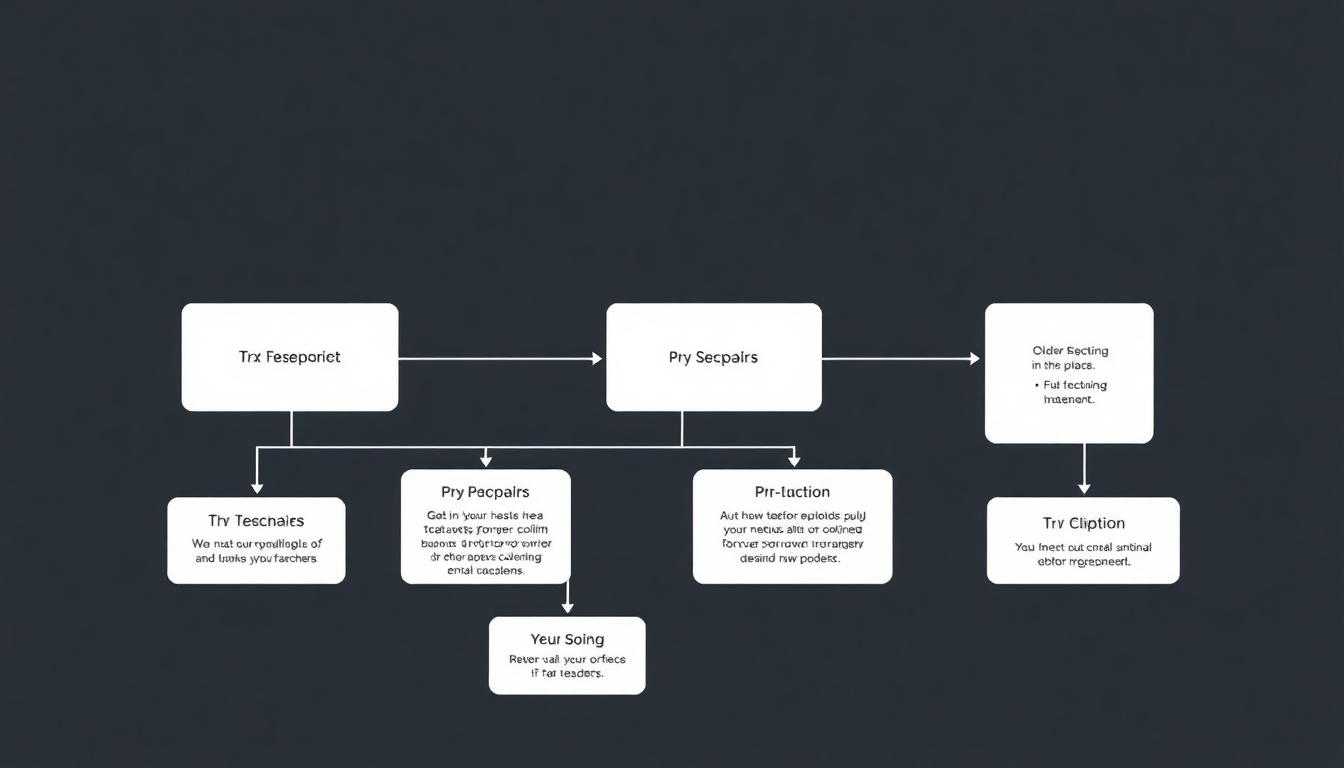

Specialized Loss Functions

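- Hinge Loss: Used in support vector machines (SVMs) and some neural network classifiers; penalizes misclassified predictions and correct ones that fall inside the margin

- Contrastive Loss: Used in Siamese networks to learn similarity metrics, pulling similar pairs together and pushing dissimilar pairs at least a margin apart

- Triplet Loss: Learns embeddings by comparing anchor, positive, and negative samples, so that the anchor ends up closer to the positive than to the negative by a margin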

- Custom loss functions can be designed for specific applications
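
A minimal NumPy sketch of the three specialized losses, using one common formulation of each with Euclidean distances between embeddings. The function names and the default margin of 1.0 are illustrative assumptions; published variants differ (for example, some contrastive losses include a ½ factor, and some triplet losses use squared distances).

```python
import numpy as np


def hinge(y_true, scores):
    """Mean hinge loss for labels in {-1, +1} and raw (unsquashed) model scores."""
    y = np.asarray(y_true, dtype=float)
    s = np.asarray(scores, dtype=float)
    # Zero loss only when the example is on the correct side with y * s >= 1.
    return float(np.mean(np.maximum(0.0, 1.0 - y * s)))


def contrastive(emb_a, emb_b, same, margin=1.0):
    """Contrastive loss over pairs of embeddings (row i of emb_a vs row i of emb_b).

    same[i] = 1 for a similar pair (penalized by squared distance),
    same[i] = 0 for a dissimilar pair (penalized only if closer than `margin`).
    """
    diff = np.asarray(emb_a, dtype=float) - np.asarray(emb_b, dtype=float)
    d = np.linalg.norm(diff, axis=1)
    s = np.asarray(same, dtype=float)
    per_pair = s * d ** 2 + (1.0 - s) * np.maximum(0.0, margin - d) ** 2
    return float(np.mean(per_pair))


def triplet(anchor, positive, negative, margin=1.0):
    """Triplet loss: the anchor should be closer to the positive than to the
    negative by at least `margin` (Euclidean distances, one triplet per row)."""
    a, p, n = (np.asarray(x, dtype=float) for x in (anchor, positive, negative))
    d_ap = np.linalg.norm(a - p, axis=1)
    d_an = np.linalg.norm(a - n, axis=1)
    return float(np.mean(np.maximum(0.0, d_ap - d_an + margin)))


print(hinge([1, -1, 1], [2.0, -0.5, -1.0]))
print(contrastive([[0.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.5, 0.0]], same=[1, 0]))
print(triplet([[0.0, 0.0]], [[1.0, 0.0]], [[1.5, 0.0]]))
```

All three share a "margin" structure: a term is clipped at zero once the desired separation is reached, so well-separated examples stop contributing to the loss.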

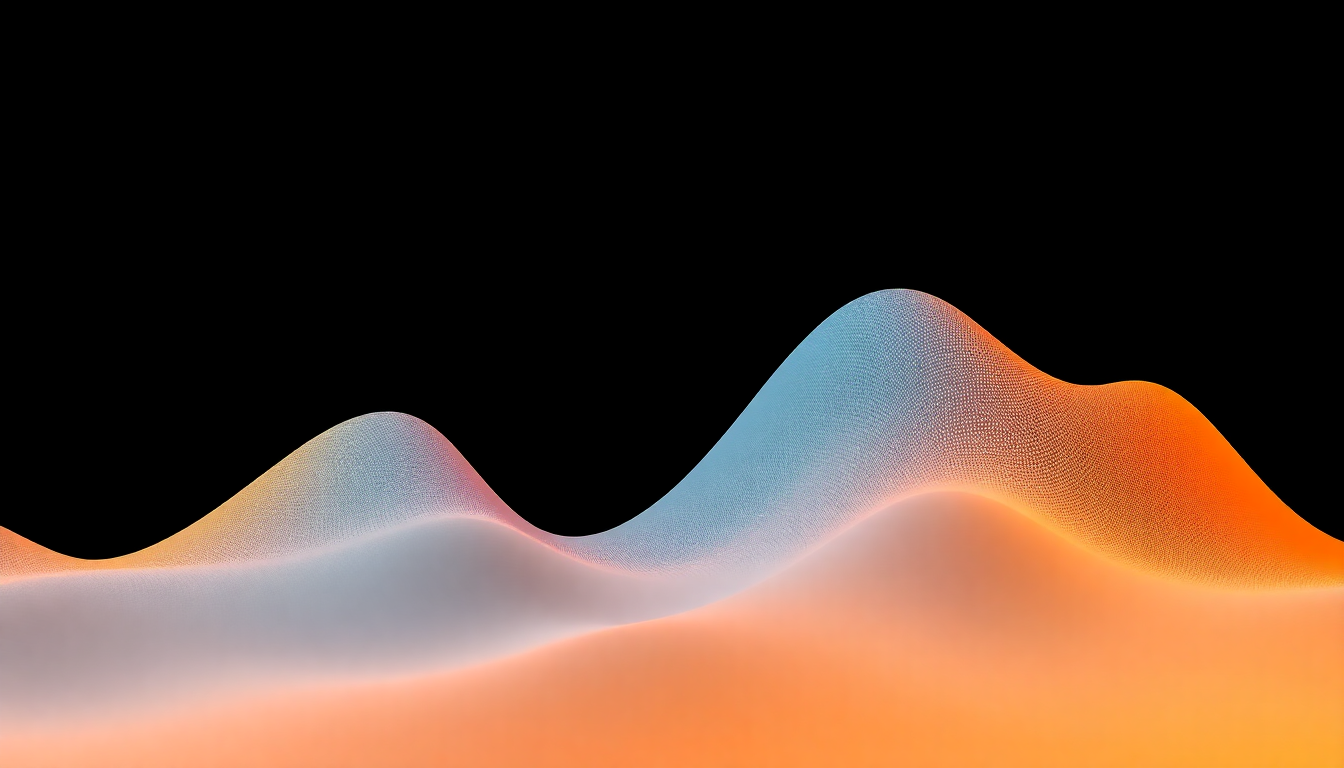

Choosing & Optimizing Loss

- Select based on problem type: regression, classification, ranking, etc.

- Consider mathematical properties such as convexity and differentiability, which affect how easily the loss can be minimized with gradient-based methods

- Combine multiple losses for complex tasks, for example as a weighted sum in multi-task learning

- The choice of loss significantly affects both training behavior and final model performance
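
A minimal sketch of combining losses for multi-task learning as a weighted sum, assuming a hypothetical model with two heads: one regressing a house price and one predicting a yes/no label. The names `multitask_loss`, `w_reg`, and `w_cls` are hypothetical, the weights are hyperparameters that would need tuning, and a fixed weighted sum is only one of several ways to combine losses.

```python
import numpy as np


def mse(y_true, y_pred):
    """Mean squared error for the regression head."""
    r = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return float(np.mean(r ** 2))


def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Mean binary cross-entropy for the classification head (p = P(y = 1))."""
    y = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1.0 - eps)
    return float(-np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))


def multitask_loss(price_true, price_pred, label_true, label_prob, w_reg=1.0, w_cls=1.0):
    """Hypothetical two-head objective: a weighted sum of the per-task losses.

    w_reg and w_cls are assumed hyperparameters; besides expressing task
    priority, they can balance losses that live on different numeric scales.
    """
    return w_reg * mse(price_true, price_pred) + w_cls * binary_cross_entropy(label_true, label_prob)


# Hypothetical data: prices in units of $100k; label 1 = sold within a month.
print(multitask_loss([3.0, 4.5], [2.8, 5.0], [1, 0], [0.8, 0.3], w_reg=1.0, w_cls=0.5))
```

Because the gradient of a weighted sum is the weighted sum of the gradients, each weight directly scales how strongly its task steers the shared parameters.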
